CS:GO Ignition ESports - Part 2

CS:GO Data Used to Drive Singular and Unreal Engine Graphics

In Part 1 of this blog, we saw how Ignition ingested live match data from CS:GO, then populated and visualised a bespoke database. In this second part, we show how we used that data to drive multiple graphical outputs on different platforms. How do we do that? With Ignition:

Ignition

Not only is Ignition great for handling, processing, storing and presenting data, it is also great at driving that data to graphics renderers. Our pluggable connector model makes Ignition completely renderer-agnostic – one system to drive them all! There’s no swapping between different proprietary systems to drive different technologies here.

Our aim with this CS:GO system was to showcase Ignition’s powerful capabilities, so we decided to drive two different graphics systems simultaneously:

Singular

Singular are making waves right now by offering HTML graphic overlays in a flexible, scalable way. You build these graphics in their intuitive web interface, then control them either manually from Singular’s own web-based control system or through a data integration with a third-party system – in steps Ignition! All the complex CS:GO data that Ignition has collected and stored can now drive HTML graphics built in Singular directly.

Integrating Data

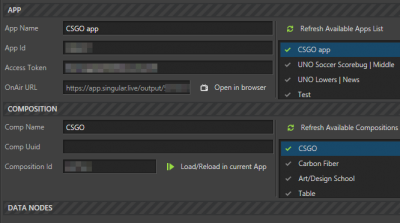

In Singular, data fields are bound to graphical elements and a JSON payload is defined for them. Ignition’s fully featured Singular integration then lets us discover which graphics are available and select the ones we want to load onto our overlay.
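To give a feel for what such a payload might look like, here’s a minimal Python sketch that flattens match state into a flat JSON object of field/value pairs. The field names (`teamAName`, `teamAScore` and so on), the `TeamState` type and the `build_scoreline_payload` helper are all hypothetical; the real keys are whatever fields you define in Singular. The sketch deliberately stops short of sending anything, so it makes no assumptions about Singular’s API.

```python
import json
from dataclasses import dataclass


@dataclass
class TeamState:
    """Hypothetical per-team match state held in Ignition."""
    name: str
    score: int


def build_scoreline_payload(team_a: TeamState, team_b: TeamState, round_number: int) -> str:
    """Flatten match state into a flat JSON object (field names are illustrative)."""
    payload = {
        "teamAName": team_a.name,
        "teamAScore": team_a.score,
        "teamBName": team_b.name,
        "teamBScore": team_b.score,
        "round": round_number,
    }
    # sort_keys keeps the output stable, which makes payloads easy to diff and log.
    return json.dumps(payload, sort_keys=True)


print(build_scoreline_payload(TeamState("Team A", 9), TeamState("Team B", 7), 17))
```

Keeping the payload flat mirrors the one-field-per-bound-element model, so each key maps to a single graphical element.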

Graphics

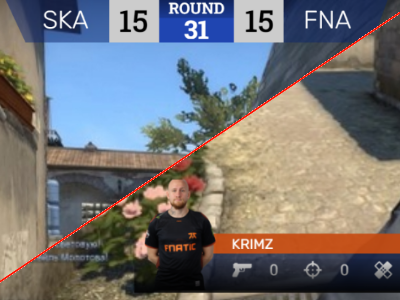

In this example, we created a simple scoreline graphic that appears over a live CS:GO game feed to keep the audience up to date with the current state of play. We also created a stats graphic showing live statistics for a chosen player. This can even be linked, via the CS:GO API, to whichever player is currently active in the match.
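Linking the stats graphic to the active player boils down to picking one player’s numbers out of the live game state. The sketch below assumes a hypothetical feed shape where `game_state["player"]` holds the active player’s name and a `match_stats` dictionary; `active_player_stats` and `STAT_FIELDS` are illustrative names, and the field names should be checked against the data your feed actually delivers.

```python
from typing import Any

# Stats shown on the graphic (assumed field names in the feed).
STAT_FIELDS = ("kills", "assists", "deaths")


def active_player_stats(game_state: dict[str, Any]) -> dict[str, Any]:
    """Pick the currently active player's stats for the stats graphic.

    Missing fields fall back to defaults, so a partial update from the
    feed never breaks the graphic.
    """
    player = game_state.get("player", {})
    stats = player.get("match_stats", {})
    result: dict[str, Any] = {"playerName": player.get("name", "")}
    for field in STAT_FIELDS:
        result[field] = stats.get(field, 0)
    return result
```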

HTML Output

Singular provides a simple URL as the output of its overlay scenes. This can then be introduced at a suitable point in your production pipeline. We chose vMix as a low-cost option for our demo, but any technology that can overlay web pages onto feeds will be able to support Singular’s output.

Unreal Engine

We chose Unreal Engine as our virtual set renderer because of its disruptive presence at the forefront of modern broadcast technology. With many traditional rendering technologies now supporting Unreal Engine for their sets, and so many newer offerings harnessing its power, it’s definitely the future of the broadcast industry.

Blueprints

We used our Main Controller pattern to get full virtual set automation and control from Ignition into Unreal Engine. We expose each graphic in our set (i.e. each object in the set that we want to drive from Ignition, whether that means populating it with data, animating it in/out or triggering a set piece) via a Sub Controller component blueprint. Our Main Controller blueprint then searches our project for these sub controllers and marshals them according to a JSON payload sent from Ignition, to achieve the desired state of the virtual set. For a deep dive into this pattern, see our blog on Simplifying Complex Graphics.
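The pattern itself lives in Blueprints, but the routing idea is easy to show in a few lines of Python. This sketch is an illustration, not our Unreal implementation: the payload is assumed to be a JSON object keyed by sub controller id, and `SubController`, `MainController` and `handle_payload` are hypothetical names.

```python
import json
from typing import Any


class SubController:
    """Stand-in for a Sub Controller component: one drivable object in the set."""

    def __init__(self, controller_id: str) -> None:
        self.controller_id = controller_id
        self.state: dict[str, Any] = {}

    def apply(self, data: dict[str, Any]) -> None:
        # Merge the requested properties (text, animation state, etc.) into this object.
        self.state.update(data)


class MainController:
    """Routes each entry of an incoming JSON payload to the matching sub controller."""

    def __init__(self, sub_controllers: list[SubController]) -> None:
        # In Unreal the registry would come from searching for sub controller
        # components; here the controllers are simply passed in.
        self.registry = {sc.controller_id: sc for sc in sub_controllers}

    def handle_payload(self, raw: str) -> list[str]:
        """Apply a payload and return any ids with no matching sub controller."""
        unknown: list[str] = []
        for controller_id, data in json.loads(raw).items():
            target = self.registry.get(controller_id)
            if target is None:
                unknown.append(controller_id)
            else:
                target.apply(data)
        return unknown
```

Returning the unknown ids rather than failing lets the controller apply the rest of a payload even when one object is missing from the set; that is one reasonable design choice among several.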

Set Graphics

In our virtual set, we modelled the desks that each team sits at. Each desk has space for five players, with a screen at the front for each player showing that player’s live health during the game. We also added large floating scores at the back of the set, which update as either team wins a round, as well as large team logos behind each desk.
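Driving these set pieces means turning the match state into a payload for the Main Controller. Here’s a hypothetical Python sketch; the sub controller ids (`deskAScreen1`, `deskAScore` and so on), the input shape and the `build_set_payload` helper are assumptions for illustration, not the ids used in our project.

```python
import json
from typing import Any

MAX_SEATS = 5  # each desk has space for five players


def build_set_payload(team_a: dict[str, Any], team_b: dict[str, Any]) -> str:
    """Build a hypothetical Main Controller payload for desk screens and scores.

    Assumed input shape: {"score": int, "players": [{"name": str, "health": int}, ...]},
    with players listed in seat order.
    """
    payload: dict[str, Any] = {}
    for desk, team in (("deskA", team_a), ("deskB", team_b)):
        payload[f"{desk}Score"] = {"value": team["score"]}
        for seat, player in enumerate(team["players"][:MAX_SEATS], start=1):
            # Clamp to 0..100 so an out-of-range feed value can't overdraw a health bar.
            health = max(0, min(100, int(player["health"])))
            payload[f"{desk}Screen{seat}"] = {"name": player["name"], "health": health}
    return json.dumps(payload)
```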

Foreground Graphics

We also built a foreground stats graphic that can be animated in and out at the front of the set, keyed in front of the talent.

NDI Out

We created an Unreal Blueprint representing a virtual NDI camera. It inherits from the existing NDI camera created by NewTek and made available as part of their Unreal Engine NDI Plugin, and we added the ability to control it as one of the set’s sub controllers. To do this, we position a number of Unreal Cinematic cameras at specific points around our set, then store those cameras as a list of addressable positions for our NDI Camera. The NDI Camera can then either animate to one of these named positions or cut directly to it.

With the power of NDI, the output of this camera is accessible to any NDI-capable device on the network. And guess what – Ignition is NDI-capable, meaning we can capture a live preview of our virtual camera within our control application itself. How’s that for instant feedback?
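The named-position idea can be modelled independently of Unreal. This Python sketch simplifies things under stated assumptions: positions are plain 3D points (a real camera also carries rotation and lens settings), a cut jumps straight to the target, and an animated move uses linear interpolation, whereas an Unreal move might use an easing curve. `VirtualCamera`, `cut_to`, `animate_to` and `lerp` are hypothetical names.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    """Linearly interpolate between two points (t=0 is a, t=1 is b)."""
    return (a[0] + (b[0] - a[0]) * t,
            a[1] + (b[1] - a[1]) * t,
            a[2] + (b[2] - a[2]) * t)


@dataclass
class VirtualCamera:
    """Hypothetical camera that moves between named, addressable positions."""
    positions: dict[str, Vec3]
    current: Vec3 = (0.0, 0.0, 0.0)

    def cut_to(self, name: str) -> None:
        # A cut jumps straight to the stored position.
        self.current = self.positions[name]

    def animate_to(self, name: str, steps: int) -> list[Vec3]:
        # An animated move returns one frame per step, ending exactly on the target.
        if steps < 1:
            raise ValueError("steps must be at least 1")
        start, end = self.current, self.positions[name]
        frames = [lerp(start, end, i / steps) for i in range(1, steps)]
        frames.append(end)
        self.current = end
        return frames
```

Appending the target as the final frame, rather than computing it, avoids floating-point drift leaving the camera slightly off its stored position.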

NDI Bridge

With the arrival of NDI 5, an exciting new feature became available that we wanted to test for this integration: NDI Bridge. It lets you share NDI streams across the internet, so you no longer have to be on the same internal network to access your NDI feeds. This opens up great possibilities, especially in cloud production: your Unreal Engine renderers can be spun up in the cloud and stream their outputs directly to where they’re needed, with no on-site render boxes.

In Summary...

We hope you’ve enjoyed delving a little deeper into this project. We think we’ve come up with a compelling implementation that shows off a number of things:

Ignition’s data-crunching power – ingest feeds from any source fast, then store and display them in a meaningful way that’s easy to browse, search and visualise.

Ignition’s flexibility for graphics control – drive graphics on any renderer from one simple-to-use interface.

We’re Unreal Engine experts – we’re excited about new technology, and Unreal Engine is definitely the future! Get in touch if you need help navigating this new landscape.

Singular is great for graphics overlays – if you need help driving or building your Singular graphics, we’ve got you covered.

Idonix – we’re great at what we do! This implementation took very little time to spin up, and it looks great. If you need help wrangling data, controlling graphics of any flavour, or with any other part of your live production, get in touch!
